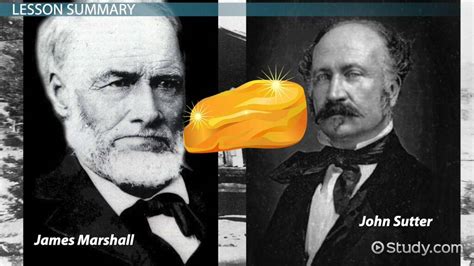

James W. Marshall and ChatGPT 3.5 – two names that may seem worlds apart, but are closer than you think. Let’s delve into a tale of gold, technology, and the rush they both ignited.

In January 1848, Marshall’s discovery of a gold nugget in California set off a frenzy that drew hundreds of thousands seeking fortune. Fast forward to November 2022, when ChatGPT 3.5 burst onto the scene, captivating users and tech investors alike with its AI capabilities.

Just as the California Gold Rush reshaped history, the AI boom ushered in a new era where Artificial Intelligence and Large Language Models (LLMs) became integral to daily life. The surge in AI adoption brought about transformative changes across industries, from enhancing productivity to revolutionizing customer experiences.

However, every coin has two sides, and the AI boom also exposed its darker facets: issues around copyright, bias, ethics, privacy, security threats, and potential job disruption loomed large on the horizon.

EU Regulation on AI Ethics

Amid these challenges came a ray of hope: the EU’s proposed AI Act, which aims to address the ethical and moral quandaries surrounding the technology’s use. The move underscored the need for responsible AI integration within businesses.

Mark Molyneux, EMEA CTO at Cohesity, rightly points out that overlooking the risks of AI adoption can have dire consequences. Ignoring the warning signs is comparable to playing with fire without considering the repercussions.

Risks Associated with Unregulated AI Use

The risks of unregulated AI use range from inadvertent data leaks by development teams to shifts in customer expectations about how companies use their data. The fallout can be catastrophic; a case in point is Microsoft’s Tay incident, in which unchecked algorithms led to the dissemination of offensive content.

Cohesity’s research into consumer sentiment on data privacy and unrestricted AI use raises red flags: an overwhelming majority of respondents expressed serious apprehension about their data falling into the wrong hands as AI technologies proliferate unchecked.

The Imperative Need for Internal Regulations

Navigating these turbulent waters safely requires internal vigilance: companies need to enforce strict rules governing access control for AI deployments. This echoes the early proliferation of cloud computing, when a lack of governance led firms down costly paths that required reboots.

Leading corporations such as Amazon and JPMorgan Chase (JPMC) have already enforced stringent controls on staff usage of ChatGPT until robust policies are in place, indicating a shift towards proactive governance ahead of impending regulatory frameworks.

Data Access Control & Transparency

One crucial aspect is drawing clear boundaries around which data internal AI projects may access. Doing so keeps projects compliant with legal constraints and data sovereignty mandates, with role-based access controls serving as safeguards against misuse or breaches.
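To make the idea of role-based access a little more concrete, here is a minimal Python sketch of how such a check might look before a dataset is handed to an internal AI project. Everything in it is an assumption made for illustration: the role names, the classification labels, the `Dataset` type, and the `can_use_for_ai` function are hypothetical and do not come from Cohesity or any particular product.

```python
from dataclasses import dataclass

# Hypothetical mapping of roles to the data classifications they may use.
ROLE_PERMISSIONS = {
    "contractor": {"public"},
    "data_scientist": {"public", "internal"},
    "ml_engineer": {"public", "internal", "confidential"},
}


@dataclass(frozen=True)
class Dataset:
    name: str
    classification: str  # hypothetical labels: "public", "internal", "confidential"
    region: str          # region the data must stay in, e.g. "EU"


def can_use_for_ai(role: str, dataset: Dataset, processing_region: str) -> bool:
    """Return True only if the role may access the data and it stays in its region."""
    # Unknown roles get an empty permission set, so access is denied by default.
    allowed = ROLE_PERMISSIONS.get(role, set())
    if dataset.classification not in allowed:
        return False
    # Simplified data sovereignty rule: processing must happen in the data's own region.
    return dataset.region == processing_region


customers = Dataset("customer_records", "confidential", "EU")
print(can_use_for_ai("ml_engineer", customers, "EU"))  # True
print(can_use_for_ai("contractor", customers, "EU"))   # False
print(can_use_for_ai("ml_engineer", customers, "US"))  # False
```

Denying unknown roles by default and checking the processing region separately keeps the two concerns, who may see the data and where it may be processed, easy to audit on their own. A real deployment would pull roles and classifications from an identity provider and a data catalogue rather than hard-coding them.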
